If you are building AI apps on WhatsApp, you face a critical risk: model manipulation. Without protection, users can attempt to deceive the agent with hidden instructions (prompt injection), force the disclosure of confidential information, or send inappropriate content.

In the 2026 transactional economy, intelligence without security is a financial vulnerability. That is why at Jelou, we have engineered an infrastructure where trust is built into the code.

Model Armor: One-Click Protection

Model Armor is the advanced security layer activated directly from the AI Agent node in Brain Studio. Once enabled, the system analyzes every incoming message in milliseconds before the model processes it.

This is not a simple word filter; it is an infrastructure-level function that proactively blocks manipulation attacks and prevents sensitive data leaks.
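To make the order of operations concrete, here is a minimal Python sketch of a screening step that runs before the model sees a message. It is an illustration only, not Jelou's implementation: `screen_message`, `handle_incoming`, the `Verdict` type, and the two regex patterns are hypothetical names and placeholders. The toy patterns stand in for a real detector, which (as noted above) goes well beyond word matching.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical toy patterns used only to make the sketch runnable.
# A production detector would not rely on a handful of regexes.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your|the) system prompt", re.IGNORECASE),
]


@dataclass
class Verdict:
    allowed: bool
    reason: Optional[str] = None


def screen_message(text: str) -> Verdict:
    """Return a verdict for one incoming message (toy detector)."""
    for pattern in INJECTION_PATTERNS:
        if pattern.search(text):
            return Verdict(allowed=False, reason="possible prompt injection")
    return Verdict(allowed=True)


def handle_incoming(text: str, call_model: Callable[[str], str]) -> str:
    """Screen the message first; only allowed messages reach the model."""
    verdict = screen_message(text)
    if not verdict.allowed:
        # The model is never called for a blocked message.
        return "Sorry, I can't help with that request."
    return call_model(text)
```

The design point is that `call_model` is invoked only after the verdict comes back clean, so a blocked message never reaches the model's context at all.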

Security Levels: From Basic Validation to Critical Compliance

We understand that every operation has a different risk profile. Therefore, Model Armor lets you choose among four granular protection levels:

Low Level: Basic input validation with minimal security filtering.

Medium Level (Recommended): Includes standard filtering and prompt injection detection. This is the ideal starting point for most operational flows.

High Level: Comprehensive threat analysis and strict content moderation for high-visibility interactions.

Critical Level: Provides maximum protection against data leaks and adds sensitive content detection. It also generates full audit logs, a technical requirement for compliance processes and external audits.

Upon activation, the system automatically starts at the Medium level, allowing teams to scale protection based on the sensitivity of the use case.
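The four levels and the Medium default can be modeled in a few lines of Python. This is a sketch under assumptions, not Brain Studio's actual configuration format: the `ProtectionLevel` enum, the `CAPABILITIES` labels, and `resolve_level` are hypothetical names that simply restate the level descriptions above.

```python
from enum import IntEnum
from typing import Optional


class ProtectionLevel(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


# Per the text, activation starts at Medium.
DEFAULT_LEVEL = ProtectionLevel.MEDIUM

# Hypothetical labels paraphrasing what each level description mentions.
CAPABILITIES = {
    ProtectionLevel.LOW: {"input_validation"},
    ProtectionLevel.MEDIUM: {"standard_filtering", "prompt_injection_detection"},
    ProtectionLevel.HIGH: {"threat_analysis", "strict_content_moderation"},
    ProtectionLevel.CRITICAL: {
        "data_leak_protection",
        "sensitive_content_detection",
        "audit_logs",
    },
}


def resolve_level(configured: Optional[str]) -> ProtectionLevel:
    """Map a configured level name to a level, falling back to Medium."""
    if configured is None:
        return DEFAULT_LEVEL
    # Raises KeyError for an unknown name instead of silently downgrading.
    return ProtectionLevel[configured.upper()]
```

Using an ordered enum makes checks such as `level >= ProtectionLevel.HIGH` straightforward when a flow should only run under stricter protection.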

Use Cases: Agentic Security in Production

The difference between a demo and a real system is the ability to operate under attack. Model Armor solves industry-specific challenges:

Fintech & Banking: Prevents malicious users from "tricking" the agent into revealing internal business rules, system prompts, or third-party data. With High or Critical levels, manipulation attempts are blocked before they compromise system integrity.

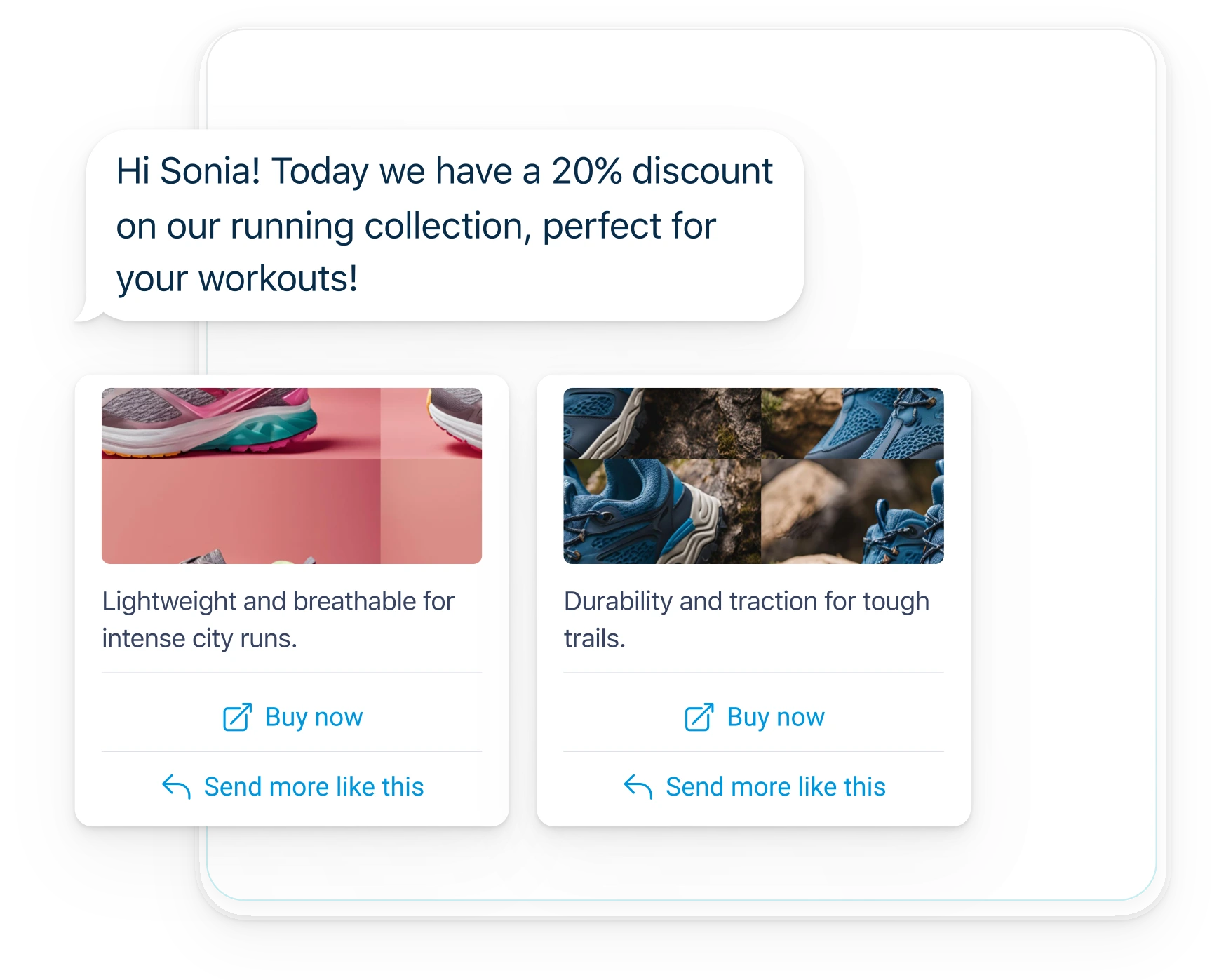

Retail & E-commerce: Protects the customer experience by automatically filtering offensive or out-of-scope messages, allowing the sales agent to stay focused on conversion without requiring manual filtering logic.

Regulated Industries (Health/Legal): Makes it easier to demonstrate compliance to regulators. At the Critical level, audit logs give companies full traceability of every filtered interaction, helping ensure that sensitive data does not leave the controlled environment.
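As an illustration of what traceability of a filtered interaction could look like, the following Python sketch builds one audit entry as a JSON line. The field names and the `audit_record` function are assumptions made for this example, not Model Armor's actual log schema. Storing a SHA-256 hash instead of the raw message is likewise a design choice of the sketch, so that the log itself does not hold sensitive text.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(conversation_id: str, message: str, level: str,
                 action: str, reason: str) -> str:
    """Build one JSON Lines audit entry for a filtered interaction.

    All field names are hypothetical. The raw message is replaced by
    its SHA-256 digest so the entry can be matched to a message later
    without storing the message text.
    """
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "conversation_id": conversation_id,
        "level": level,
        "action": action,
        "reason": reason,
        "message_sha256": hashlib.sha256(message.encode("utf-8")).hexdigest(),
    }
    return json.dumps(record, sort_keys=True)
```

A caller would append each returned line to append-only storage, for example `audit_record("conv-123", text, "critical", "blocked", "possible data leak")`, where every argument value shown is made up for the example.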

Who is Model Armor for?

Enterprise Security Teams: They require technical guarantees that malicious actors cannot manipulate the agent.

Banking & Fintech Product Managers: They need traceability and regulatory compliance (ISO/PCI).

CX Leaders: They want agents that operate exclusively within the business scope, without operational noise.

Proven Infrastructure, Not Experiments

Deploying AI on WhatsApp is not about "chatting"; it is about orchestrating transactions with integrity. At Jelou, our infrastructure has already processed over $120 million under the strictest international standards: ISO 27001:2022 and PCI DSS v4.0.1.

By activating Model Armor, you elevate your AI application from a prototype to a banking-grade solution, ensuring every agent always acts within your business logic and global regulations.

Are you ready to shield your WhatsApp AI operation?

Activate Model Armor in Brain Studio (Free Trial)